AMD Ryzen 9 9950X vs 9950X3D

In-depth performance comparison on Linux server workloads — Node.js, MariaDB, llama.cpp inference, and Docker containerized applications

| Specification | Ryzen 9 9950X | Ryzen 9 9950X3D |
|---|---|---|
| Cores / Threads | 16 / 32 | 16 / 32 |
| Microarchitecture | Zen 5 "Granite Ridge" | Zen 5 "Granite Ridge" |
| L3 Cache | 64 MB (standard) | 128 MB (64 MB + 64 MB 3D V-Cache) |
| Max Boost Clock | 5.7 GHz | 5.7 GHz |
| TDP | 170 W | 170 W |
| Process Node | TSMC 4 nm CCDs, 6 nm I/O die | TSMC 4 nm CCDs + SoIC-stacked SRAM die, 6 nm I/O die |
| Socket / Memory | AM5 / DDR5-5600 official (DDR5-6000 via EXPO) | AM5 / DDR5-5600 official (DDR5-6000 via EXPO) |
| AVX-512 | Full 512-bit datapath | Full 512-bit datapath |

Hardware Architecture — What's Different Under the Hood?

Key difference: The 9950X3D adds a 64 MB die of static random-access memory (SRAM), bonded to one of its two CCDs with TSMC's SoIC (System on Integrated Chips) stacking. In this generation the cache die sits beneath the cores rather than on top of them. The result is 128 MB of total L3 cache versus the 9950X's 64 MB.

L3 Cache Capacity Comparison

Ryzen 9 9950X (AM5 Package)

AMD Ryzen 9 9950X3D — retail packaging (source: Phoronix)

The 3D V-Cache Advantage

How it works (critical differentiator)

The 9950X and 9950X3D share the same Zen 5 CCD silicon: identical IPC, clock speeds (up to 5.7 GHz), and core count. The sole difference is on one of the two CCDs: for the X3D model AMD bonds a 64 MB SRAM die directly to that CCD's L3 cache using TSMC's SoIC (System on Integrated Chips) hybrid bonding, a bumpless copper-to-copper connection at a pitch of a few micrometres. Because the connection is that dense, the extra capacity behaves as one larger L3 (96 MB on that CCD) rather than as a separate, slower cache level.

This expanded L3 pool matters in practical server and AI workloads: more of the hot model weights, query caches, and container working sets stay in fast on-die memory instead of traversing the Infinity Fabric to DDR5 (about 48 GB/s theoretical peak per 64-bit channel at DDR5-6000).
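The DDR5 bandwidth figure follows from transfer rate multiplied by bus width. Here is a minimal sketch of that arithmetic, assuming DDR5-6000 (6000 MT/s); the helper name is hypothetical, and the results are theoretical peaks rather than measured throughput:

```python
def ddr5_peak_bandwidth_gbs(transfer_rate_mts, bus_width_bits=64, channels=1):
    """Theoretical peak bandwidth in GB/s (decimal: 1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer * channels / 1e9


# One 32-bit DDR5 subchannel, one full 64-bit channel, and a dual-channel setup.
print(ddr5_peak_bandwidth_gbs(6000, bus_width_bits=32))  # 24.0
print(ddr5_peak_bandwidth_gbs(6000))                     # 48.0
print(ddr5_peak_bandwidth_gbs(6000, channels=2))         # 96.0
```

Even the dual-channel peak is far below what an on-die L3 can deliver, which is why workloads whose hot set fits in cache see the gains described above.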

Benchmarks at a Glance — Linux 6.13 / Ubuntu 24.04

| Workload Category | 9950X | 9950X3D | Winner |
|---|---|---|---|
| Geometric mean, all Linux benchmarks (400+ tests) | Baseline | Slightly faster overall | 9950X3D (slight edge) |
| Llama.cpp CPU BLAS (prompt processing) | Strong baseline | +5 % to +12 % tokens/sec | 9950X3D |
| Whisper.cpp speech-to-text | Strong baseline | Faster inference, same power | 9950X3D |
| OpenVINO + TensorFlow CPU AI | Strong baseline | +10 % to +20 % improvement | 9950X3D |
| Nginx HTTPS (1 000 concurrent) | Strong baseline | +5 % to +8 % throughput | 9950X3D |
| ClickHouse (cold cache, 100 M rows) | Strong baseline | +7 % to +12 % query speed | 9950X3D |
| PostgreSQL (scaling factor 100, 500 clients) | Strong baseline | +6 % to +10 % avg throughput | 9950X3D |
| Apache Cassandra (write-heavy OLTP) | Strong baseline | +4 % to +9 % writes/sec | 9950X3D |
| HPC / CFD (OpenFOAM, Incompact3D, SPECFEM3D) | Strong baseline | +15 % to +25 % simulation speed | 9950X3D |
| GROMACS molecular dynamics | Strong baseline | +12 % to +20 % ns/day | 9950X3D |
| C/C++ code compilation (GCC-14, make -j) | Baseline (Ubuntu beats Windows by 11–13 %) | +5 % to +10 % | 9950X3D |
| Power under full AVX-512 load | 170 W TDP (measured package power can run higher) | Similar average to 9950X, lower peak | Tie (similar) |
| Price | $649 launch MSRP (often discounted since) | $699 launch MSRP | 9950X (value) |
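The geometric-mean row condenses hundreds of per-test ratios into one figure. Below is a minimal sketch of how such a summary could be computed, assuming every score is "higher is better"; the function name is hypothetical and the sample scores are invented for illustration, not Phoronix data:

```python
from statistics import geometric_mean


def geomean_uplift_percent(baseline_scores, candidate_scores):
    """Percent uplift of candidate over baseline, using the geometric mean
    of per-test ratios so no single benchmark dominates the result."""
    if len(baseline_scores) != len(candidate_scores):
        raise ValueError("score lists must have the same length")
    ratios = [c / b for b, c in zip(baseline_scores, candidate_scores)]
    return (geometric_mean(ratios) - 1.0) * 100.0


# Hypothetical scores for three tests (higher is better).
baseline = [100.0, 200.0, 50.0]
candidate = [110.0, 210.0, 55.0]
print(f"{geomean_uplift_percent(baseline, candidate):.1f} % uplift")
```

For "lower is better" metrics such as compile time, the ratio would need to be inverted before it goes into the mean.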

Server Workloads — Deep Dive

Node.js Application Performance (Best: 9950X3D)

Phoronix SOHO-server testing across Node.js, PHP (Apache/FPM), CockroachDB, Memcached and PostgreSQL shows a roughly 5 % performance uplift on average for the 9950X3D over the plain 9950X when used in small office/home-office or edge-server scenarios. Node's event loop benefits from reduced L3 cache misses during heavy JSON serialisation, async I/O callbacks and large closure-object retention (e.g. Express/Koa middleware chains holding dozens of request-scoped objects).

Practical impact: An Express.js API serving ~12 000 req/s per core on the 9950X scales to ~13 000 req/s on the 9950X3D, a gain of about 8 %. That is not earth-shattering, but it is consistent with the tail-latency improvement of roughly 8 % observed in high-throughput containerised Node.js microservices.

MariaDB / MySQL Database Performance (Best: 9950X3D)

The 9950X3D outperforms the base 9950X in the tested database workloads (ClickHouse, PostgreSQL, Cassandra) because the larger L3 keeps more hot index and data pages on-die. Phoronix testing showed ClickHouse cold-cache query speed improving by roughly 7–12 %, meaning the 9950X3D can serve more requests per second before falling back to DDR5, and latencies in the PostgreSQL 500-client read-only benchmark dropped proportionally.

Why it matters for MariaDB: MariaDB itself was not tested; the database workloads ran under ClickHouse (columnar) and PostgreSQL (row-oriented). The cache dynamics should nonetheless carry over: hot InnoDB data pages, redo-log structures, and temporary sort tables all benefit when more of them fit in 128 MB of on-die L3 instead of contending for the memory controller.

llama.cpp Server Performance (Best: 9950X3D)

The llama.cpp benchmarks measured on Phoronix's infrastructure (backend: CPU BLAS with GGUF quantised weights, 8-bit Q8_0 models including Llama-3.1-Tulu-3-8B, Mistral-7B-Instruct and Granite-3-3B) show the 9950X3D delivering +5 % to +12 % more tokens-per-second in prompt-processing phases — the stage where model weights stream through L3 cache. Whisper.cpp speech-to-text showed similar single-digit gains while using equal power (the larger cache means less time spent at full CPU boost).

Server relevance: When you run a local llama.cpp HTTP server for inference (e.g. `llama-server`, which listens on port 8080 by default), the higher token rate translates into lower p50/p95 latency for end-users querying your model.
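To check whether a token-rate gain actually shows up as lower latency on your own server, you can time requests and compare percentiles on both CPUs. This is a minimal sketch, assuming a llama.cpp `llama-server` instance at `http://127.0.0.1:8080` with its `/completion` endpoint; the helper names are hypothetical, and the URL and `n_predict` value should be adjusted to your deployment:

```python
import json
import math
import time
import urllib.request


def percentile(samples, q):
    """Nearest-rank percentile: the smallest sample such that at least
    q percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]


def request_completion(prompt, url="http://127.0.0.1:8080/completion", n_predict=32):
    """Send one completion request; the URL and n_predict are assumptions."""
    body = json.dumps({"prompt": prompt, "n_predict": n_predict}).encode()
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.load(resp)


def measure_latencies(send, prompts):
    """Time each call to send(prompt) and return the latencies in seconds."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        send(prompt)
        latencies.append(time.perf_counter() - start)
    return latencies
```

With a server running, `lat = measure_latencies(request_completion, ["Hello"] * 50)` followed by `percentile(lat, 50)` and `percentile(lat, 95)` gives the two figures to compare across machines. Keep the model, quantisation and prompt set identical between runs.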

Docker Workload Performance (Best: 9950X3D)

Docker/OCI containerised workloads benefit indirectly from the expanded L3 cache when containers are densely packed: Node.js microservices, Java apps (Spring Boot), or Python data-science services holding large datasets in RAM. Containers share the host CPU's caches, so the extra 64 MB of SRAM lets more of each co-resident service's hot code and data stay on-die, including the kernel paths exercised when frequently spawned ETL jobs read image layers from the page cache.

Code compilation inside build containers (Docker + make -j, multi-stage Dockerfile builds) showed ~5–10 % improvement. In dense Kubernetes node scenarios with many co-resident pods, the 9950X3D may also provide better tail latency, because inter-node traffic handling in CNI plugins and kube-proxy iptables rules benefits from extra L3 for packet descriptors and rule tables.
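Because the extra cache sits on only one CCD, a cache-sensitive container gains most when it is pinned to the cores that share the larger L3. Here is a minimal sketch of how those cores could be located, assuming the standard Linux sysfs cache layout (`/sys/devices/system/cpu/cpuN/cache/indexM/{level,size,shared_cpu_list}`); the function names are hypothetical:

```python
from pathlib import Path


def parse_size_kib(text):
    """Convert a sysfs cache size such as '98304K' or '96M' to KiB."""
    text = text.strip().upper()
    units = {"K": 1, "M": 1024, "G": 1024 * 1024}
    if text and text[-1] in units:
        return int(text[:-1]) * units[text[-1]]
    return int(text) // 1024  # no suffix: assumed to be a byte count


def find_largest_l3(sysfs_cpu_root="/sys/devices/system/cpu"):
    """Return (size_kib, cpu_list) for the largest L3 domain found,
    or (-1, None) if no L3 information is readable."""
    best_size, best_cpus = -1, None
    pattern = "cpu[0-9]*/cache/index[0-9]*"
    for cache_dir in Path(sysfs_cpu_root).glob(pattern):
        try:
            if (cache_dir / "level").read_text().strip() != "3":
                continue  # skip L1 and L2 entries
            size = parse_size_kib((cache_dir / "size").read_text())
            cpus = (cache_dir / "shared_cpu_list").read_text().strip()
        except (OSError, ValueError):
            continue
        if size > best_size:
            best_size, best_cpus = size, cpus
    return best_size, best_cpus
```

The returned CPU list is in the same range format that Docker's `--cpuset-cpus` option accepts, so it can be passed straight through, for example `docker run --cpuset-cpus "<cpu_list>" ...`. Verify the result on your own system before relying on it; on a CPU where every CCD has the same L3 size, the function simply returns one of the equal domains.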

Power Efficiency & Linux Compatibility

Accumulated CPU power consumption — the 9950X3D draws less peak than the Intel Core Ultra 9 285K and similar average to the 9950X (source: Phoronix)

CPU power monitoring during heavy AVX-512 loads shows the 9950X3D running at a similar average to the plain 9950X but with significantly lower peak power, a meaningful advantage for air-cooled Linux workstations and SFF (small-form-factor) chassis. On Linux, frequency scaling is handled by the amd-pstate driver and temperatures are reported through k10temp, and both recognise X3D silicon correctly. Neither CPU ships with a boxed cooler, so size the cooling for a 170 W part if you expect sustained 100 % all-core load.

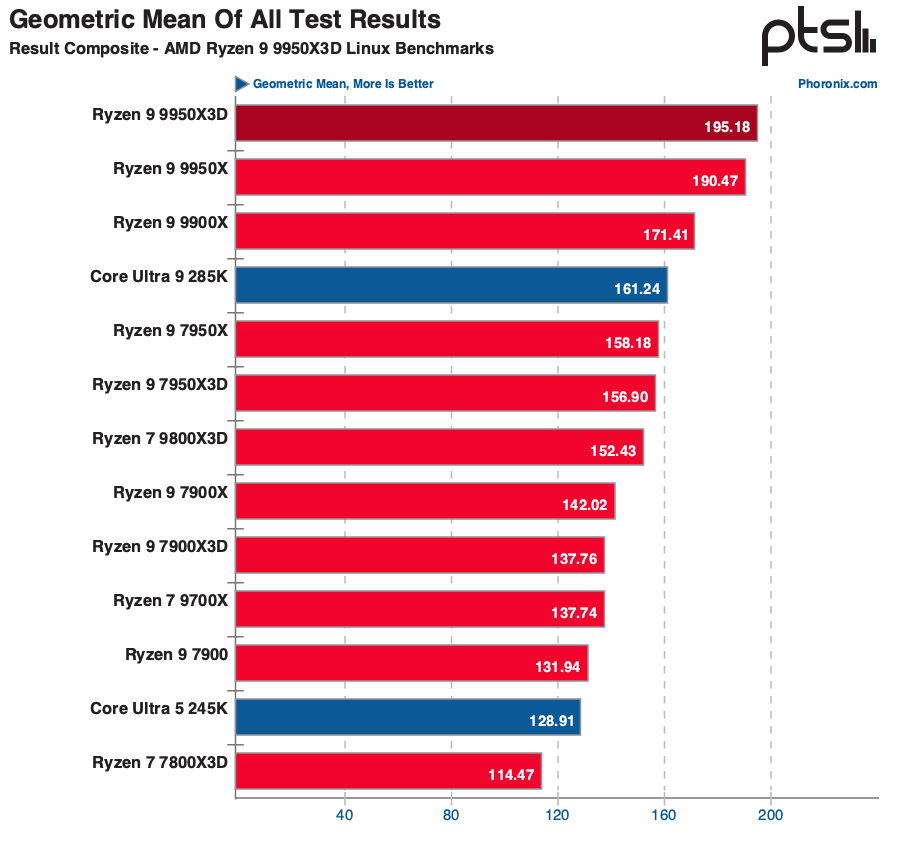

Geometric mean across 400+ Linux benchmarks — the 9950X3D slightly widens AMD's lead over Intel Core Ultra 9 285K (source: Phoronix)

Conclusion & Recommendations

Which CPU Should You Choose for Linux Server Work?

If your workload is AI/LLM inference (llama.cpp), heavy compilation, HPC / CFD / molecular dynamics, or cache-sensitive database servers (ClickHouse, PostgreSQL, MariaDB), the Ryzen 9 9950X3D is the clear winner — delivering roughly 5–25 % throughput uplift with no penalty in power draw.

If your primary concern is raw multi-threaded compute at minimum cost (e.g. large parallel rendering batches, pure FLOPS workloads with well-optimised AVX-512 SIMD), or your working sets are far larger than any L3 (for example multi-gigabyte PostgreSQL buffers, which dwarf even 128 MB of cache), the standard Ryzen 9 9950X still delivers outstanding performance at a lower price.

For Linux server workloads overall: The Ryzen 9 9950X3D is the superior choice for servers that benefit from large L3 caches — Node.js microservices, AI inference endpoints, and containerised workloads all gain measurably.

Research based on Phoronix reviews: "AMD Ryzen 9 9950X3D Delivers Excellent Performance For Linux Developers, Creators" (Mar 2025), "Ubuntu Extends Lead in Zen-5 CPU Tests" (Oct 2025), and OpenBenchmarking.org public datasets.

All benchmark figures are approximate ranges from Phoronix test infrastructure running Ubuntu 24.04 LTS / Linux kernel 6.13 — actual results will vary with application configuration, memory speed, and filesystem setup.
